Do you think your Spark job failed because Spark is slow? More often, it failed because the infrastructure underneath could not keep up. With small data, everything works well: you run the jobs, results appear, and the setup seems stable. As the workload grows, executors crash, jobs slow down, and scaling becomes unpredictable. The cause is usually not Spark itself. This is where Spark on Kubernetes comes in.

In this article, we will learn how Apache Spark and Kubernetes fit together, how to deploy Spark on Kubernetes, and how to run and debug jobs on it.

Let’s learn a setup that works under pressure!

Understanding Spark on Kubernetes

Apache Spark processes large amounts of data in parallel. Kubernetes schedules containers and manages resources across machines.

When you run Apache Spark on Kubernetes, you are not creating a permanent Spark cluster. You stop managing Spark clusters and instead submit jobs, each of which runs inside Kubernetes as a temporary workload.

How to Deploy Spark on Kubernetes?

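You don’t need to set up a standing Spark cluster to deploy Spark on Kubernetes. You need a Spark distribution on the machine you submit from, a container image that contains Spark, and access to the Kubernetes API. After that, you just submit jobs to Kubernetes. Here is an example: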

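./bin/spark-submit \
  --master k8s://https://<k8s-api> \
  --deploy-mode cluster \
  --name data-job \
  --class com.example.Main \
  --conf spark.kubernetes.container.image=<spark-image> \
  local:///opt/spark/app.jar

The container image setting is required. Here `<spark-image>` stands for an image that contains Spark and, because the jar path uses the `local://` scheme, the application jar as well.

When you deploy Spark on Kubernetes, the following happens behind the scenes: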

- Kubernetes launches a driver pod

- The driver requests executor pods

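- Executors process data in parallel

- Once the job finishes, the executor pods are removed, while the driver pod stays in a completed state so that its logs remain available until it is deleted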
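The lifecycle above can be observed from the command line. This is a minimal sketch, assuming the job runs in the `default` namespace and relying on the `spark-role` label that Spark sets on the pods it creates. The function name `list_spark_pods` is made up for illustration.

```shell
# List the pods that belong to Spark jobs, filtered by role.
# Spark labels its pods with spark-role=driver or spark-role=executor.
# list_spark_pods is a hypothetical helper name, not part of Spark or kubectl.
list_spark_pods() {
  role="$1"                  # "driver" or "executor"
  namespace="${2:-default}"  # namespace the job was submitted to
  kubectl get pods -n "$namespace" -l "spark-role=$role"
}

# Usage:
#   list_spark_pods driver
#   list_spark_pods executor default
```

Calling it a few times while a job is active should show the driver pod first, then the executors it requested, and finally the executors going away once the job ends.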

Running Spark on Kubernetes with a Service Account

The driver pod needs permission to create and delete executor pods, so it should run under a service account that is bound to a suitable role:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: spark-sa
  namespace: default
---
# Namespaced permissions the driver needs to manage its executors
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: spark-role
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["pods", "services", "configmaps"]
    verbs: ["create", "get", "list", "watch", "delete"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: spark-role-binding
  namespace: default
subjects:
  - kind: ServiceAccount
    name: spark-sa
    namespace: default  # required for ServiceAccount subjects
roleRef:
  kind: Role
  name: spark-role
  apiGroup: rbac.authorization.k8s.io

Use it in spark-submit:

--conf spark.kubernetes.authenticate.driver.serviceAccountName=spark-sa

Spark Kubernetes Configuration

These settings are passed as additional spark-submit flags:

--conf spark.executor.instances=3 \
--conf spark.executor.memory=2g \
--conf spark.executor.cores=1 \
--conf spark.driver.memory=1g \
--conf spark.kubernetes.container.image=apache/spark:latest \
--conf spark.kubernetes.namespace=default \
--conf spark.kubernetes.executor.request.cores=0.5 \
--conf spark.kubernetes.executor.limit.cores=1

Debugging Failed Spark Jobs on Kubernetes

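Here is how you can debug failed Spark jobs on Kubernetes:

Check Driver Logs

kubectl logs <driver-pod-name>

Check Executor Pods

kubectl get pods
kubectl describe pod <executor-pod>

In the describe output, check the container's last state and the events at the bottom. A reason of OOMKilled means the pod exceeded its memory limit.

Helm-Based Deployment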

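Many production teams don’t use raw YAML and install Spark through a Helm chart instead:

helm repo add bitnami https://charts.bitnami.com/bitnami
helm install spark bitnami/spark

Note that this chart deploys a standalone Spark cluster (a master and workers) inside Kubernetes, which is a different model from the per-job pods created by spark-submit.

When NOT to Run Spark on Kubernetes?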

Avoid it when:

- Workloads are very small

- You need ultra-low latency streaming

- Your team lacks Kubernetes experience

Sometimes traditional Spark is still simpler.

Why Spark on Kubernetes Is Getting Popular Among Teams?

Traditional Spark clusters come with overhead: they need constant management, even when idle. When you use Spark on Kubernetes, the model changes. You gain the following:

- On-demand resource usage

- Automatic scaling of executors, once dynamic allocation is enabled

- Better isolation between jobs

- Easier integration with cloud systems

This means you focus on running the workload rather than maintaining the infrastructure.
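Automatic scaling is not on by default. In Spark it comes from dynamic allocation. Below is a minimal sketch of the relevant settings, assuming Spark 3.x, where shuffle tracking can stand in for an external shuffle service. The variable name and the executor bounds are illustrative, not recommendations.

```shell
# Extra spark-submit flags that let Spark add and remove executors as load changes.
# The min/max values are placeholders chosen for illustration only.
DYNAMIC_ALLOCATION_CONF="
  --conf spark.dynamicAllocation.enabled=true
  --conf spark.dynamicAllocation.shuffleTracking.enabled=true
  --conf spark.dynamicAllocation.minExecutors=1
  --conf spark.dynamicAllocation.maxExecutors=10
"

# Usage (the variable is left unquoted on purpose so it splits into separate flags):
#   ./bin/spark-submit --master k8s://https://<k8s-api> ... $DYNAMIC_ALLOCATION_CONF local:///opt/spark/app.jar
```

These settings scale the number of executor pods within a job. Adding or removing cluster nodes to make room for those pods is a separate concern, handled on the Kubernetes side.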

Spark on Kubernetes vs Traditional Spark

| Area | Traditional Spark | Spark on Kubernetes |
|---|---|---|
| Setup | Fixed cluster | Job-based execution |
| Scaling | Manual | Automatic |
| Resource use | Always active | On demand |
| Maintenance | Continuous | Reduced |
| Flexibility | Limited | High |

Where Most Deployments Go Wrong?

This is the part that most articles skip.

1. Mistakenly Thinking It Is a Static Cluster

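Spark on Kubernetes is not a fixed cluster. Each job gets its own driver and executors, and they go away when the job ends.

If you size and plan as though the cluster were permanent, you lose most of the benefits and set yourself up for surprises.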

2. Incorrect Resource Allocation

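If executor memory is too low, the container exceeds its limit and Kubernetes kills the pod, so the job crashes. If it is too high, the pods reserve memory they never use and fewer executors fit on each node.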
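To pick a sensible value, it helps to know what Kubernetes actually sees. The memory requested for an executor pod is the executor memory plus an overhead, which by default is 10% of the executor memory for JVM jobs, with a floor of 384 MiB. Here is a rough sketch of that arithmetic, assuming those defaults have not been changed. The function `executor_pod_memory_mib` is a hypothetical helper, not a Spark tool.

```shell
# Estimate the memory (in MiB) requested for one executor pod.
# Assumes the default overhead: max(10% of executor memory, 384 MiB).
# executor_pod_memory_mib is a made-up helper name for this illustration.
executor_pod_memory_mib() {
  executor_mib="$1"
  overhead_mib=$(( executor_mib / 10 ))
  if [ "$overhead_mib" -lt 384 ]; then
    overhead_mib=384
  fi
  echo $(( executor_mib + overhead_mib ))
}

executor_pod_memory_mib 2048   # spark.executor.memory=2g
executor_pod_memory_mib 8192   # spark.executor.memory=8g
```

With `spark.executor.memory=2g` the pod asks for 2432 MiB, not 2048, and with `8g` it asks for 9011 MiB. Non-JVM jobs such as PySpark use a larger default overhead factor, so their pods need more headroom still.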

3. Overlooking the Network

Executors exchange data with each other during shuffles, which is very network-intensive. A slow or congested pod network shows up directly as slow jobs.

4. Relying on Local Storage

Pods are temporary. Anything written to a pod's local disk disappears when the pod is removed, so results belong in external storage.
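One way to keep data beyond the life of a pod is to mount persistent storage into the executors. The sketch below uses Spark's volume settings and assumes that a PersistentVolumeClaim named `spark-data-pvc` already exists in the job's namespace and that its access mode allows every executor to mount it. The variable name, the volume name `data`, the claim name, and the mount path are all placeholders.

```shell
# Flags that mount an existing PersistentVolumeClaim into every executor pod.
# "data", "spark-data-pvc" and "/data" are placeholder names for illustration.
EXECUTOR_VOLUME_CONF="
  --conf spark.kubernetes.executor.volumes.persistentVolumeClaim.data.options.claimName=spark-data-pvc
  --conf spark.kubernetes.executor.volumes.persistentVolumeClaim.data.mount.path=/data
"

# Usage (unquoted on purpose so the variable splits into separate flags):
#   ./bin/spark-submit ... $EXECUTOR_VOLUME_CONF local:///opt/spark/app.jar
```

For final results, writing directly to object storage or HDFS from the job is often the simpler choice, since nothing then depends on a volume attached to a pod.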

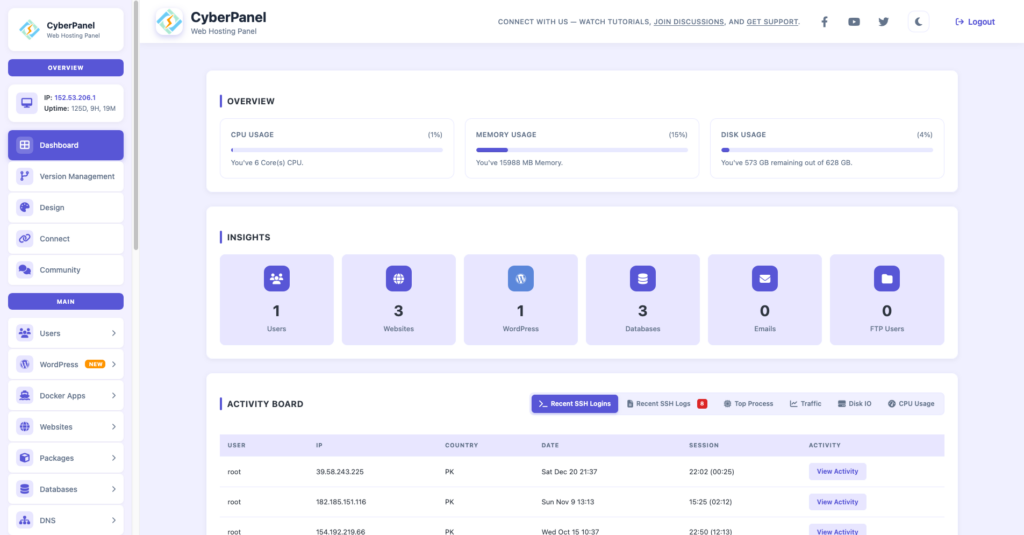

Role of CyberPanel in Big Data Environments

CyberPanel is a free and open-source web hosting control panel. It is not part of Spark execution, but it can take care of the hosting environment around it.

It chiefly supports:

- server management

- domain configuration

- SSL setup

- application hosting dashboards

In a full-stack solution, Spark manages data processing, Kubernetes manages computing resources, and CyberPanel is used for the administration of infrastructure access.

Conclusion

It is fine to run Spark on conventional clusters, but this is often no longer the most efficient approach. Today, systems are expected to be flexible, scalable, and capable of fast development and deployment.

By running Spark on Kubernetes, you move closer to the cloud-native paradigm, in which jobs can scale automatically, resources are used on demand, and infrastructure management becomes much easier.

Begin by running a small Spark job on Kubernetes today. Try out scaling, keep an eye on the job, and slowly switch over to production workloads. After you get used to this flexibility, classic cluster management will seem out-of-date.

FAQs

Is Spark on Kubernetes suitable for real-time processing?

Yes, in many cases. It can run streaming workloads such as Spark Structured Streaming when resources are sized properly, although very strict latency requirements may be a poor fit, as noted above.

What storage works best with Spark on Kubernetes?

Object storage such as S3, or a distributed file system such as HDFS, is commonly used.

Does Spark on Kubernetes require cluster admin access?

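Not full cluster admin access. You need permission to create and manage pods, services, and configmaps in the namespace where the job runs, which a namespaced Role like the one shown earlier can grant. Someone with rights to create that Role and RoleBinding has to set them up once.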
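Whether a service account has the permissions it needs can be checked directly with `kubectl auth can-i`. This is a small sketch, assuming the `spark-sa` account from the earlier example and that your own user is allowed to impersonate service accounts. The function name `can_spark_sa` is hypothetical.

```shell
# Ask the API server whether the spark-sa service account may perform an action.
# can_spark_sa is a made-up helper; spark-sa and default match the earlier example.
can_spark_sa() {
  verb="$1"                  # e.g. create, delete, list
  resource="$2"              # e.g. pods, services, configmaps
  namespace="${3:-default}"  # namespace the job will run in
  kubectl auth can-i "$verb" "$resource" \
    --as="system:serviceaccount:${namespace}:spark-sa" \
    -n "$namespace"
}

# Usage:
#   can_spark_sa create pods
#   can_spark_sa delete configmaps default
```

Each call answers yes or no, which makes a missing permission visible before a job fails on it.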