When you serve static assets with an efficient cache policy, the user’s browser stores these files locally, so the page takes less time to load. Without caching, every resource on a page, such as HTML, CSS, JavaScript, and images, must be downloaded each time the page is loaded.

Browser caching allows the browser to retrieve static assets like CSS, JavaScript, and images from its local cache. As a result, pages load more quickly on subsequent visits. The first visit gets no benefit, because nothing has been cached yet.

What is a cache?

A cache is a high-speed data storage layer in computing that saves a portion of data that is often temporary in nature so that subsequent requests for that data can be served up faster than accessing the data’s primary storage location. Caching allows you to quickly reuse data that has been previously retrieved or computed.

How does caching actually work?

The data in a cache is usually kept in rapid access hardware like RAM (random-access memory), but it can also be utilized in conjunction with a software component. The basic goal of a cache is to speed up data retrieval by eliminating the need to contact the slower storage layer behind it.

In contrast to databases, which store complete and durable data, a cache usually holds only a subset of the data, and only temporarily.
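The idea can be sketched in a few lines of Python. This is a minimal illustration, not a production cache: `slow_lookup`, `ReadThroughCache`, and the 60-second lifetime are hypothetical names and values chosen for the example, and the "slow layer" is simulated with a short sleep.

```python
import time


# Hypothetical stand-in for a slow primary store (database, disk, remote API).
def slow_lookup(key: str) -> str:
    time.sleep(0.01)  # simulate the latency of the slower layer
    return f"value-for-{key}"


class ReadThroughCache:
    """Keeps recently read values in memory for a limited time (TTL)."""

    def __init__(self, loader, ttl_seconds: float):
        self._loader = loader
        self._ttl = ttl_seconds
        self._entries = {}  # key -> (value, expiry timestamp)
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> str:
        now = time.monotonic()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            self.hits += 1
            return entry[0]
        # Missing or expired: ask the slow layer and remember the answer.
        self.misses += 1
        value = self._loader(key)
        self._entries[key] = (value, now + self._ttl)
        return value


cache = ReadThroughCache(slow_lookup, ttl_seconds=60)
first = cache.get("logo.png")   # miss: goes to the slow layer
second = cache.get("logo.png")  # hit: served from memory
```

The second call never touches `slow_lookup`, which is the whole point: repeated reads of the same data are answered from the faster layer until the entry expires.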

Advantages of caching

Let's go through some advantages of caching.

Enhance the performance of your application

Reading data from an in-memory cache is extremely fast (sub-millisecond) because memory is orders of magnitude faster than disk, whether magnetic or SSD. This faster data access improves the application’s overall performance.

Reduce backend load

By shifting a portion of the read load from the backend database to the in-memory layer, caching reduces the stress on your database, keeping it from slowing down under heavy load or even crashing during spikes.

Eliminate database hotspots

Many applications tend to retrieve a subset of data more frequently than the rest. As a result, hot spots may occur in the database, and you may need to overprovision its resources based on the throughput requirements for the most frequently used data. For frequently accessed data, an in-memory cache reduces overprovisioning requirements while delivering fast, predictable performance.

Reduce the cost of your database

A single cache instance can handle a large number of input/output operations per second (IOPS), allowing it to replace multiple database instances and reduce costs significantly. This matters most if the primary database charges by throughput. Under certain conditions, the price difference can be large.

Predictable performance

Dealing with surges in application usage is a common problem in modern systems. Increased database load causes longer data retrieval times, making overall application performance unpredictable. A high-throughput in-memory cache can mitigate this problem.

Increase read throughput (IOPS)

In-memory systems have substantially higher request rates (IOPS) than a comparable disk-based database, in addition to having reduced latency. When utilized as a distributed side-cache, a single instance can fulfil hundreds or even thousands of requests per second.

What is asset caching?

Asset caching is a straightforward notion. When a browser downloads an asset, it uses the server’s policy to determine whether or not it should download the asset again on subsequent visits. If the server doesn’t provide a policy, the browser falls back to its own defaults, which usually means caching files for that session.
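As a rough model of that decision, the sketch below treats the server's policy as a single lifetime in seconds. `StoredAsset` and `needs_download` are hypothetical names for illustration. Real browsers follow more detailed rules, and the no-policy case is simplified here to "always download again".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StoredAsset:
    url: str
    stored_at: float        # time (in seconds) when the local copy was saved
    max_age: Optional[int]  # lifetime from the server's policy, None if none was sent

    def is_fresh(self, now: float) -> bool:
        if self.max_age is None:
            # Simplification: real browsers apply their own defaults here.
            return False
        return (now - self.stored_at) < self.max_age


def needs_download(asset: Optional[StoredAsset], now: float) -> bool:
    """True when the browser has no usable local copy of the asset."""
    return asset is None or not asset.is_fresh(now)


stylesheet = StoredAsset(url="/style.css", stored_at=1000.0, max_age=3600)
```

With this model, `needs_download(stylesheet, now=2000.0)` is `False` because the copy is only 1000 seconds old, while `needs_download(stylesheet, now=5000.0)` is `True` because the one-hour lifetime has passed.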

What is static asset caching?

Static asset caching means the server specifies how long the browser should temporarily retain, or cache, a resource. Any subsequent requests for that resource are served from the browser’s local copy rather than from the network.

Any time a visitor to your site has to fetch a fresh copy of something that could have been cached in the browser or on the server, you’re using an inefficient cache policy, when you could instead be serving them saved, ready-to-use content.

What is an efficient cache policy?

If your static files do not change (or you have an acceptable cache busting mechanism), we suggest setting your cache policy to 6 months or 1 year.
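One common cache busting mechanism is to put a hash of the file's content into its name, so that a changed file gets a new URL and the long-lived cached copy of the old one is simply never requested again. The sketch below shows the idea under that assumption. `fingerprinted_name` and the 8-character digest length are illustrative choices, not something a particular plugin is known to use.

```python
import hashlib
from pathlib import PurePosixPath


def fingerprinted_name(path: str, content: bytes, digest_length: int = 8) -> str:
    """Insert a short content hash before the file extension."""
    digest = hashlib.sha256(content).hexdigest()[:digest_length]
    p = PurePosixPath(path)
    return str(p.with_name(f"{p.stem}.{digest}{p.suffix}"))


old_name = fingerprinted_name("css/style.css", b"body { color: black; }")
new_name = fingerprinted_name("css/style.css", b"body { color: navy; }")
```

Because `old_name` and `new_name` differ, pages that reference the new name cause the browser to fetch the updated file even though the old one was cached for a year.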

Elements like global CSS/JS files, logos, graphics, and so on rarely change on finished websites, so 6 months or a year is a fair cache expiry to work with.

Of course, if you frequently alter the above static files, you can choose a shorter cache expiry time as long as it is greater than 3 months.
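HTTP cache lifetimes are expressed in seconds, so it helps to see what these durations look like as header values. The following sketch converts a lifetime into a `Cache-Control` value. The helper name `cache_control_value` is hypothetical, and "6 months" is approximated as 180 days.

```python
from datetime import timedelta

SIX_MONTHS = timedelta(days=180)  # approximation used in this example
ONE_YEAR = timedelta(days=365)


def cache_control_value(lifetime: timedelta) -> str:
    """Build a Cache-Control header value for a publicly cacheable asset."""
    seconds = int(lifetime.total_seconds())
    return f"public, max-age={seconds}"


print(cache_control_value(SIX_MONTHS))  # public, max-age=15552000
print(cache_control_value(ONE_YEAR))    # public, max-age=31536000
```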

Serve static assets with an efficient cache policy

There are multiple ways to serve static files with an efficient cache policy. We will discuss three methods:

- Using .htaccess file if you are using LiteSpeed Enterprise or Apache

- Using LiteSpeed Cache

- Using W3 Total Cache plugin

Serve Static Assets using .htaccess file on Apache and LiteSpeed Enterprise

Note: If you are using OpenLiteSpeed or NGINX, this method will not work.

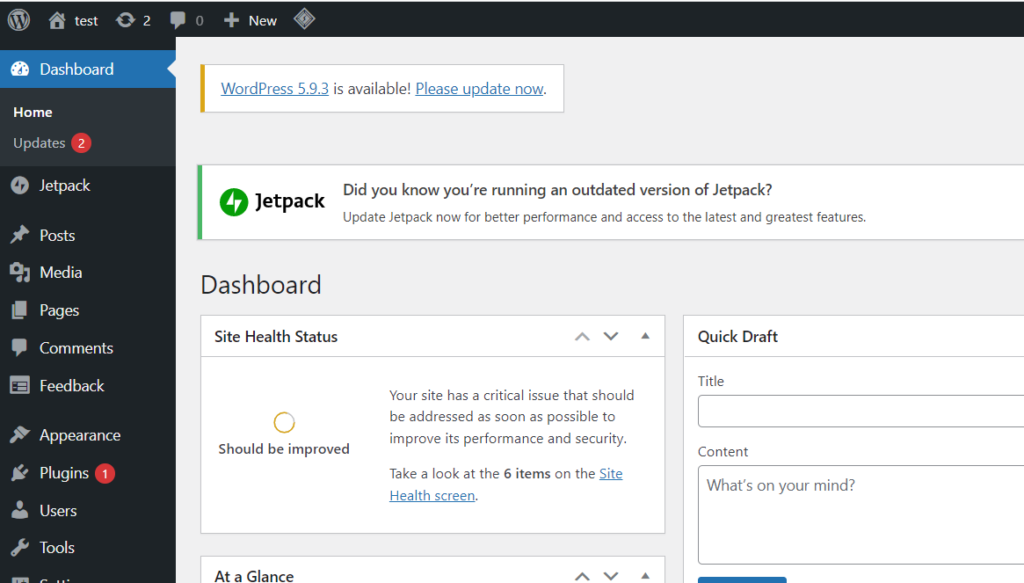

Log in to your WordPress Dashboard

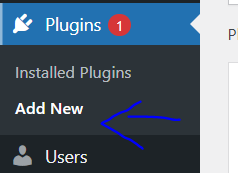

Click on Plugins -> Add new from the left hand side menu

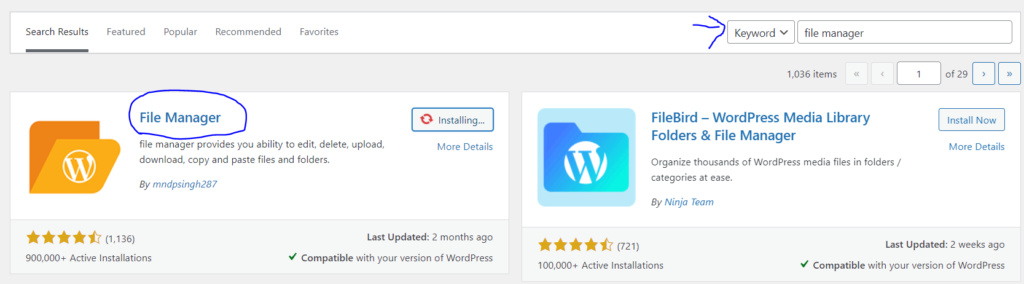

Search for “File manager”. Install and activate the plugin

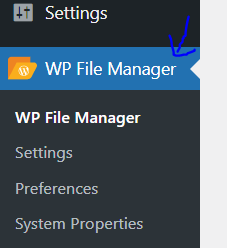

Click on “File Manager” from the left hand side menu

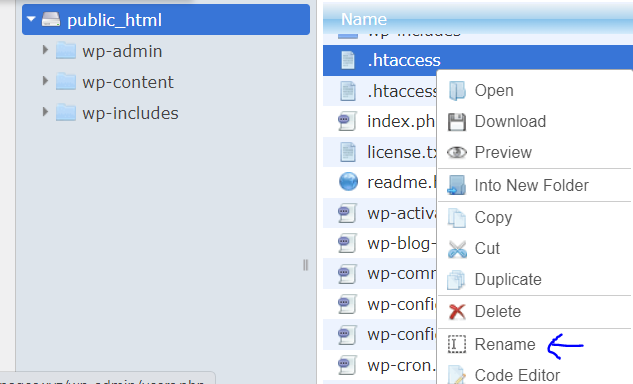

In the public_html folder, right-click on .htaccess and click Rename

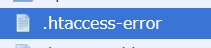

Change the file name to .htaccess-error

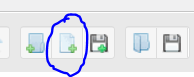

Click on the “New file” icon at the top

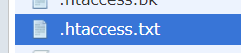

Name the file “.htaccess”

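Paste the following code, then save and close the file. If the original .htaccess contained other rules, such as the WordPress permalink rewrite block, copy them into the new file as well.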

<IfModule mod_expires.c>
    ExpiresActive On

    # CSS, JavaScript
    ExpiresByType text/css "access plus 1 year"
    ExpiresByType text/javascript "access plus 1 year"
    ExpiresByType application/javascript "access plus 1 year"

    # Fonts
    ExpiresByType font/ttf "access plus 1 year"
    ExpiresByType font/otf "access plus 1 year"
    ExpiresByType font/woff "access plus 1 year"
    ExpiresByType font/woff2 "access plus 1 year"
    ExpiresByType application/font-woff "access plus 1 year"

    # Images
    ExpiresByType image/jpeg "access plus 1 year"
    ExpiresByType image/gif "access plus 1 year"
    ExpiresByType image/png "access plus 1 year"
    ExpiresByType image/webp "access plus 1 year"
    ExpiresByType image/svg+xml "access plus 1 year"
    ExpiresByType image/x-icon "access plus 1 year"

    # Video
    ExpiresByType video/webm "access plus 1 year"
    ExpiresByType video/mp4 "access plus 1 year"
    ExpiresByType video/mpeg "access plus 1 year"

    # Others
    ExpiresByType application/pdf "access plus 1 year"
    ExpiresByType image/vnd.microsoft.icon "access plus 1 year"
</IfModule>

Serve Static Assets using LiteSpeed Cache

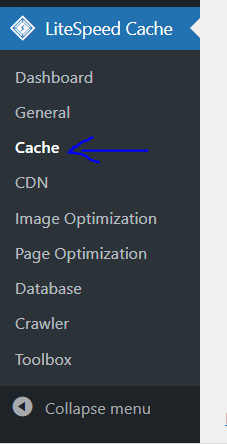

First, install and activate the LiteSpeed Cache plugin. Once it is installed, follow the guide below:

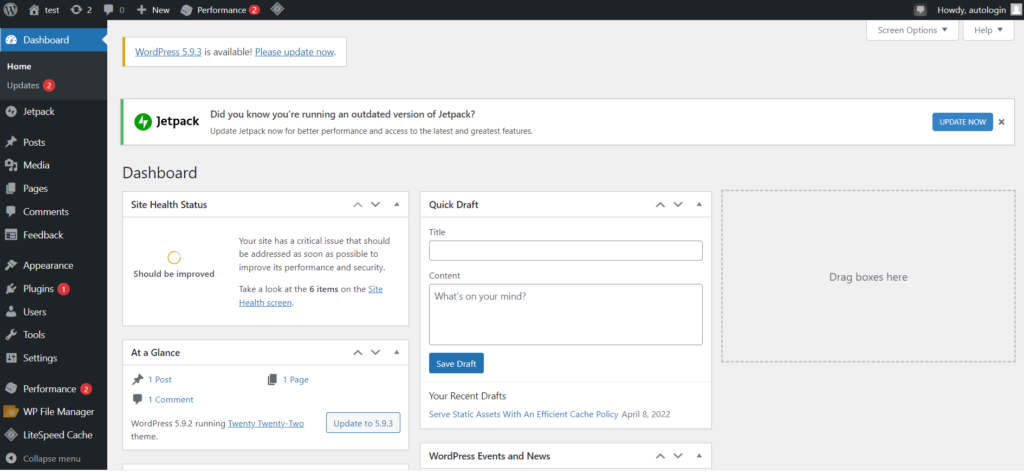

- Go to your WordPress Dashboard

- Click on LiteSpeed Cache -> Cache from the left hand side menu

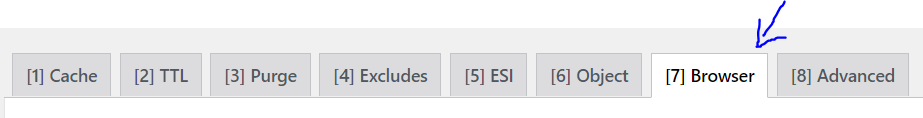

- Click on the “Browser” tab from the top

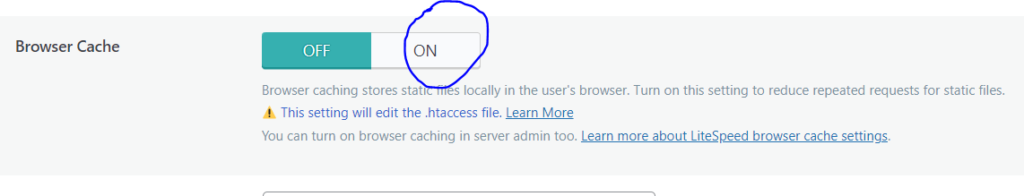

- Turn on the “Browser Cache” toggle

- Click “Save Changes”

Serve Static Assets using W3 Total Cache

Install and activate the W3 Total Cache plugin first, then follow the guide below.

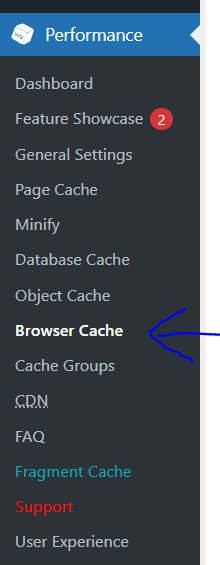

- Go to your WordPress Dashboard

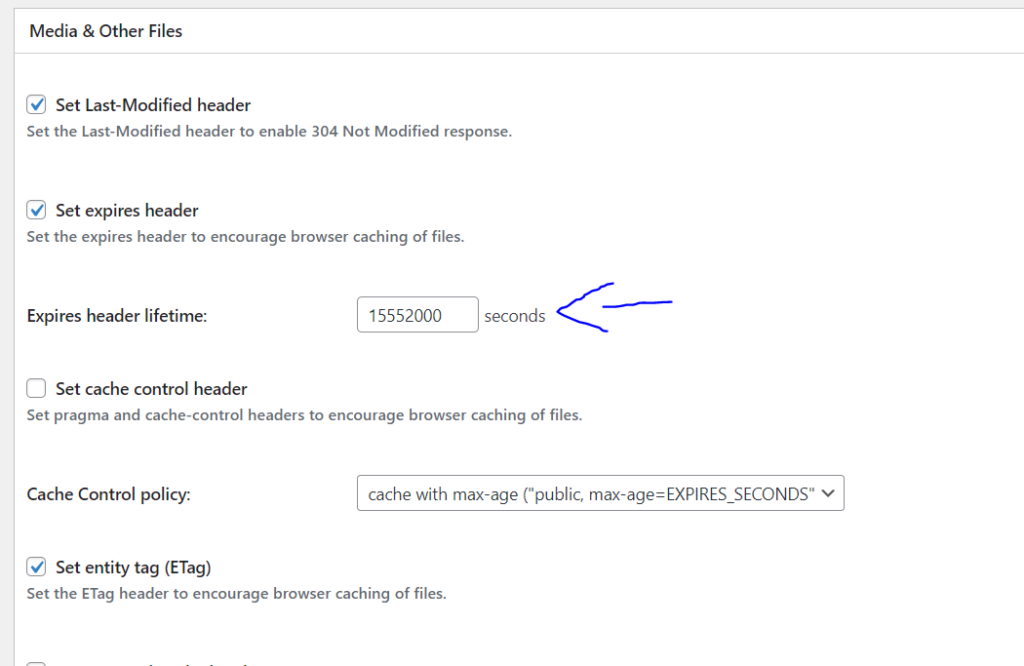

- Click on Performance -> Browser Cache from the left hand side menu

- Scroll down to “Media and Other Files”. Change the “Expires Header Lifetime” to at least 15552000s (180 days).

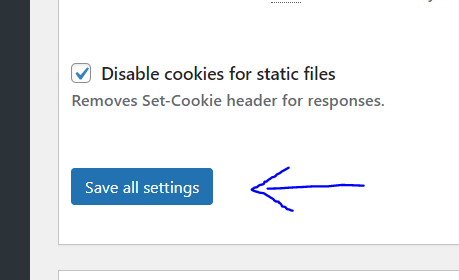

- Click on “Save all settings”
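Whichever method you use, one way to confirm the result is to inspect the response headers of a static file (for example, in the browser's developer tools) and compare the advertised lifetime against the 180-day minimum mentioned above. The sketch below does that comparison on a plain dictionary of headers. `advertised_lifetime` and `meets_policy` are hypothetical helpers, and the sample header values are made up for the example.

```python
from datetime import timedelta
from email.utils import parsedate_to_datetime
from typing import Mapping, Optional

MINIMUM_LIFETIME = timedelta(days=180)  # 15552000 seconds


def advertised_lifetime(headers: Mapping[str, str]) -> Optional[timedelta]:
    """Lifetime a response advertises, preferring Cache-Control over Expires."""
    normalized = {name.lower(): value for name, value in headers.items()}
    for directive in normalized.get("cache-control", "").split(","):
        name, _, value = directive.strip().partition("=")
        if name.lower() == "max-age" and value.isdigit():
            return timedelta(seconds=int(value))
    if "expires" in normalized and "date" in normalized:
        try:
            expires = parsedate_to_datetime(normalized["expires"])
            sent = parsedate_to_datetime(normalized["date"])
        except (TypeError, ValueError):
            return None  # unparseable date, treat as no usable policy
        return expires - sent
    return None


def meets_policy(headers: Mapping[str, str]) -> bool:
    lifetime = advertised_lifetime(headers)
    return lifetime is not None and lifetime >= MINIMUM_LIFETIME


# Made-up sample headers for illustration.
good = {"Cache-Control": "public, max-age=15552000"}
short = {"Cache-Control": "max-age=3600"}
expires_only = {
    "Date": "Tue, 21 Oct 2025 07:28:00 GMT",
    "Expires": "Wed, 21 Oct 2026 07:28:00 GMT",
}
```

Here `meets_policy(good)` and `meets_policy(expires_only)` are `True`, while `meets_policy(short)` is `False` because one hour is far below 180 days.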

Conclusion

When you serve static assets with an efficient cache policy, the user’s browser saves these files locally, reducing the time it takes for the page to load on repeat visits. Without caching, all of a page’s resources, such as HTML, CSS, JavaScript, and images, must be downloaded every time it is loaded.